internet of things (IoT)

What is the internet of things (IoT)?

The internet of things, or IoT, is a network of interrelated devices that connect and exchange data with other IoT devices and the cloud. IoT devices are typically embedded with technology such as sensors and software and can include mechanical and digital machines and consumer objects.

Increasingly, organizations in a variety of industries are using IoT to operate more efficiently, deliver enhanced customer service, improve decision-making and increase the value of the business.

With IoT, data is transferable over a network without requiring human-to-human or human-to-computer interactions.

A thing in the internet of things can be a person with a heart monitor implant, a farm animal with a biochip transponder, an automobile that has built-in sensors to alert the driver when tire pressure is low, or any other natural or man-made object that can be assigned an Internet Protocol address and is able to transfer data over a network.

How does IoT work?

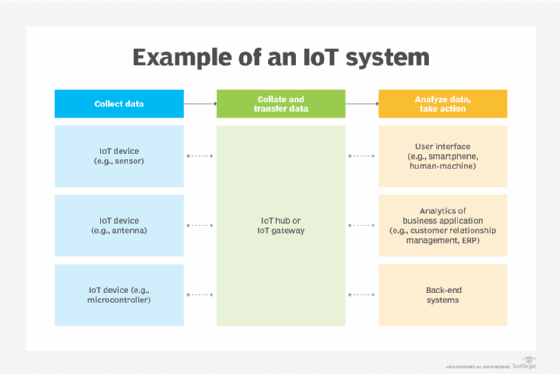

An IoT ecosystem consists of web-enabled smart devices that use embedded systems -- such as processors, sensors and communication hardware -- to collect, send and act on data they acquire from their environments.

This article is part of

Ultimate IoT implementation guide for businesses

IoT devices share the sensor data they collect by connecting to an IoT gateway, which acts as a central hub where IoT devices can send data. Before the data is shared, it can also be sent to an edge device where that data is analyzed locally. Analyzing data locally reduces the volume of data sent to the cloud, which minimizes bandwidth consumption.

Sometimes, these devices communicate with other related devices and act on the information they get from one another. The devices do most of the work without human intervention, although people can interact with the devices -- for example, to set them up, give them instructions or access the data.

The connectivity, networking and communication protocols used with these web-enabled devices largely depend on the specific IoT applications deployed.

IoT can also use artificial intelligence and machine learning to aid in making data collection processes easier and more dynamic.

Why is IoT important?

IoT helps people live and work smarter. Consumers, for example, can use IoT-embedded devices -- such as cars, smartwatches or thermostats -- to improve their lives. For example, when a person arrives home, their car could communicate with the garage to open the door; their thermostat could adjust to a preset temperature; and their lighting could be set to a lower intensity and color.

In addition to offering smart devices to automate homes, IoT is essential to business. It provides organizations with a real-time look into how their systems really work, delivering insights into everything from the performance of machines to supply chain and logistics operations.

IoT enables machines to complete tedious tasks without human intervention. Companies can automate processes, reduce labor costs, cut down on waste and improve service delivery. IoT helps make it less expensive to manufacture and deliver goods, and offers transparency into customer transactions.

IoT is one of the most important technologies and it continues to advance as more businesses realize the potential of connected devices to keep them competitive.

What are the benefits of IoT to organizations?

IoT offers several benefits to organizations. Some benefits are industry-specific and some are applicable across multiple industries. Common benefits for businesses include the following:

- Monitors overall business processes.

- Improves the customer experience.

- Saves time and money.

- Enhances employee productivity.

- Provides integration and adaptable business models.

- Enables better business decisions.

- Generates more revenue.

IoT encourages companies to rethink how they approach their businesses and gives them the tools to improve their business strategies.

Generally, IoT is most abundant in manufacturing, transportation and utility organizations that use sensors and other IoT devices; however, it also has use cases for organizations within the agriculture, infrastructure and home automation industries, leading some organizations toward digital transformation.

IoT can benefit farmers in agriculture by making their job easier. Sensors can collect data on rainfall, humidity, temperature and soil content and IoT can help automate farming techniques.

IoT can also help monitor operations surrounding infrastructure. Sensors, for example, can monitor events or changes within structural buildings, bridges and other infrastructure that could potentially compromise safety. This provides benefits such as improved incident management and response, reduced costs of operations and improved quality of service.

A home automation business can use IoT to monitor and manipulate mechanical and electrical systems in a building. On a broader scale, smart cities can help citizens reduce waste and energy consumption.

IoT touches every industry, including healthcare, finance, retail and manufacturing.

What are the pros and cons of IoT?

Some of the advantages of IoT include the following:

- Enables access to information from anywhere at any time on any device.

- Improves communication between connected electronic devices.

- Enables the transfer of data packets over a connected network, which can save time and money.

- Collects large amounts of data from multiple devices, aiding both users and manufacturers.

- Analyzes data at the edge, reducing the amount of data that needs to be sent to the cloud.

- Automates tasks to improve the quality of a business's services and reduces the need for human intervention.

- Enables healthcare patients to be cared for continually and more effectively.

Some disadvantages of IoT include the following:

- Increases the attack surface as the number of connected devices grows. As more information is shared between devices, the potential for a hacker to steal confidential information increases.

- Makes device management challenging as the number of IoT devices increases. Organizations might eventually have to deal with a massive number of IoT devices, and collecting and managing the data from all those devices could be challenging.

- Has the potential to corrupt other connected devices if there's a bug in the system.

- Increases compatibility issues between devices, as there's no international standard of compatibility for IoT. This makes it difficult for devices from different manufacturers to communicate with each other.

IoT standards and frameworks

Notable organizations that are involved in the development of IoT standards include the following:

- International Electrotechnical Commission.

- Institute of Electrical and Electronics Engineers (IEEE).

- Industrial Internet Consortium.

- Open Connectivity Foundation.

- Thread Group.

- Connectivity Standards Alliance.

Some examples of IoT standards include the following:

- IPv6 over Low-Power Wireless Personal Area Networks (6LoWPAN) is an open standard defined by the Internet Engineering Task Force (IETF). This standard enables any low-power radio to communicate to the internet, including 804.15.4, Bluetooth Low Energy and Z-Wave for home automation. In addition to home automation, this standard is also used in industrial monitoring and agriculture.

- Zigbee is a low-power, low-data rate wireless network used mainly in home and industrial settings. ZigBee is based on the IEEE 802.15.4 standard. The ZigBee Alliance created Dotdot, the universal language for IoT that enables smart objects to work securely on any network and understand each other.

- Data Distribution Service (DDS) was developed by the Object Management Group and is an industrial IoT (IIoT) standard for real-time, scalable and high-performance machine-to-machine (M2M) communication.

IoT standards often use specific protocols for device communication. A chosen protocol dictates how IoT device data is transmitted and received. Some example IoT protocols include the following:

- Constrained Application Protocol. CoAP is a protocol designed by the IETF that specifies how low-power, compute-constrained devices can operate in IoT.

- Advanced Message Queuing Protocol. The AMQP is an open source published standard for asynchronous messaging by wire. AMQP enables encrypted and interoperable messaging between organizations and applications. The protocol is used in client-server messaging and in IoT device management.

- Long-Range Wide Area Network (LoRaWAN). This protocol for WANs is designed to support huge networks, such as smart cities, with millions of low-power devices.

- MQ Telemetry Transport. MQTT is a lightweight protocol that's used for control and remote monitoring applications. It's suitable for devices with limited resources.

IoT frameworks include the following:

- Amazon Web Services (AWS) IoT is a cloud computing platform for IoT released by Amazon. This framework is designed to enable smart devices to easily connect and securely interact with the AWS cloud and other connected devices.

- Arm Mbed IoT is an open source platform to develop apps for IoT based on Arm microcontrollers. The goal of this IoT platform is to provide a scalable, connected and secure environment for IoT devices by integrating Mbed tools and services.

- Microsoft Azure IoT Suite platform is a set of services that let users interact with and receive data from their IoT devices, as well as perform various operations over data, such as multidimensional analysis, transformation and aggregation, and visualize those operations in a way that's suitable for business.

- Calvin is an open source IoT platform from Ericsson designed for building and managing distributed applications that let devices talk to each other. Calvin includes a development framework for application developers, as well as a runtime environment for handling the running application.

Consumer and enterprise IoT applications

There are numerous real-world applications of the internet of things, ranging from consumer IoT and enterprise IoT to manufacturing and IIoT. IoT applications span numerous verticals, including automotive, telecom and energy.

In the consumer segment, for example, smart homes that are equipped with smart thermostats, smart appliances and connected heating, lighting and electronic devices can be controlled remotely via computers and smartphones.

Wearable devices with sensors and software can collect and analyze user data, sending messages to other technologies about the users with the aim of making users' lives easier and more comfortable. Wearable devices are also used for public safety -- for example, by improving first responders' response times during emergencies by providing optimized routes to a location or by tracking construction workers' or firefighters' vital signs at life-threatening sites.

In healthcare, IoT gives providers the ability to monitor patients more closely using an analysis of the data that's generated. Hospitals often use IoT systems to complete tasks such as inventory management for both pharmaceuticals and medical instruments.

Smart buildings can, for instance, reduce energy costs using sensors that detect how many occupants are in a room. The temperature can adjust automatically -- for example, turning the air conditioner on if sensors detect a conference room is full or turning the heat down if everyone in the office has gone home.

In agriculture, IoT-based smart farming systems can help monitor light, temperature, humidity and soil moisture of crop fields using connected sensors. IoT is also instrumental in automating irrigation systems.

In a smart city, IoT sensors and deployments, such as smart streetlights and smart meters, can help alleviate traffic, conserve energy, monitor and address environmental concerns and improve sanitation.

IoT security and privacy issues

IoT connects billions of devices to the internet and involves the use of billions of data points, all of which must be secured. Due to its expanded attack surface, IoT security and IoT privacy are cited as major concerns.

One of the most notorious IoT attacks happened in 2016. The Mirai botnet infiltrated domain name server provider Dyn, resulting in major system outages for an extended period of time. Attackers gained access to the network by exploiting poorly secured IoT devices. This is one the largest distributed denial-of-service attacks ever seen and Mirai is still being developed today.

Because IoT devices are closely connected, a hacker can exploit one vulnerability to manipulate all the data, rendering it unusable. Manufacturers that don't update their devices regularly -- or at all -- leave them vulnerable to cybercriminals. Additionally, connected devices often ask users to input their personal information, including names, ages, addresses, phone numbers and even social media accounts -- information that's invaluable to hackers.

Hackers aren't the only threat to IoT; privacy is another major concern. For example, companies that make and distribute consumer IoT devices could use those devices to obtain and sell user personal data.

What is the history of IoT?

Kevin Ashton, co-founder of the Auto-ID Center at the Massachusetts Institute of Technology (MIT), first mentioned the internet of things in a presentation he made in 1999 to Procter & Gamble (P&G). Wanting to bring radio frequency ID to the attention of P&G's senior management, Ashton called his presentation "Internet of Things" to incorporate the cool new trend of 1999: the internet. MIT professor Neil Gershenfeld's book, When Things Start to Think, also appeared in 1999. Although the book didn't use the exact term, it provided a clear vision of where IoT was headed.

IoT has evolved from the convergence of wireless technologies, microelectromechanical systems, microservices and the internet. This convergence helped tear down the silos between operational technology and information technology, enabling unstructured machine-generated data to be analyzed for insights to drive improvements.

Although Ashton's was the first mention of IoT, the idea of connected devices has been around since the 1970s, under the monikers embedded internet and pervasive computing.

The first internet appliance, for example, was a Coke machine at Carnegie Mellon University in the early 1980s. Using the web, programmers could check the status of the machine and determine whether there would be a cold drink awaiting them, should they decide to make the trip to the machine.

IoT evolved from M2M communication with machines connecting to each other via a network without human interaction. M2M refers to connecting a device to the cloud, managing it and collecting data.

Taking M2M to the next level, IoT is a sensor network of billions of smart devices that connect people, computer systems and other applications to collect and share data. As its foundation, M2M offers the connectivity that enables IoT.

IoT is also a natural extension of supervisory control and data acquisition (SCADA), a category of software application programs for process control, the gathering of data in real time from remote locations to control equipment and conditions. SCADA systems include hardware and software components. The hardware gathers and feeds data into a desktop computer that has SCADA software installed, where it's then processed and presented in a timely manner. Late-generation SCADA systems developed into first-generation IoT systems.

The concept of the IoT ecosystem, however, didn't really come into its own until 2010 when, in part, the government of China said it would make IoT a strategic priority in its five-year plan.

Between 2010 and 2019, IoT evolved with broader consumer use. People increasingly used internet-connected devices, such as smartphones and smart TVs, which were all connected to one network and could communicate with each other.

In 2020, the number of IoT devices continued to grow along with cellular IoT, which now worked on 2G, 3G, 4G and 5G as well as LoRaWAN and long-term evolution for machines, or LTE-M.

In 2023, billions of internet-connected devices collect and share data for consumer and industry use. IoT has been an important aspect in the creation of digital twins -- which is a virtual representation of a real-world entity or process.

The physical connections between the entity and its twin are most often IoT sensors, and a well-configured IoT implementation is often a prerequisite for digital twins.

Likewise, IoT in healthcare has expanded in the use of wearables and in-home sensors that can remotely monitor a patient's health.

Learn about nine more current and potential future trends in IoT.